| home Synthesizers FPGAs Games Software Hardware Pinball Tech | |||

| Downloads /zips/c64_ga_image.zip | |||

Image conversion for the Commodore-64 using a GA |

||||

Bitmap images on the Commodore-64I'm doing quite a few projects related to retro computing. Most of that is focused specifically on the Commodore 64 computer. Recently I have been experimenting with some of the graphics modes of the C64 to fix a few bugs in my emulation code. This got me thinking what the limits are that can actually be archived with the VIC-II chip (the chip that generates the video picture inside the C64). As I'm not really good at creating pixel art, I needed another approach to get something decent looking on the screen to experiment with. That is when I got the idea of making a picture converter instead. This way I can use an existing picture and convert into a format supported by the C64 and see how that would have looked back in the days. The maximum resolution the C64 is capable of displaying is 320 by 200 pixels (ignoring the fact that it is possible to open the borders with tricks). The horizontal resolution can be halved to 160 pixels in some of the graphic modes to increase the amount of simultaneous colors. Other constrains are the fixed color palette of 16 colors. So that is what we need to work with. Taking a source picture with a maximum resolution of 320 by 200 and convert it into a representation using the 16 colors of the C64 palette.

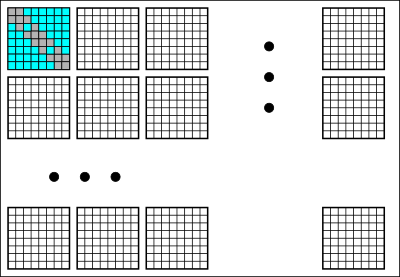

Bitmap limitationsOn the C64 it is not possible to freely use all of the 16 colors for each pixel. There are certain limitation that where implemented in the machine to reduce the amount of memory required for storing a picture. This allow high resolution pictures to be produced on a 8 bit machine with limited storage space and little available memroy bandwidth. A image of 320 by 200 with a color depth of 4 bits (to address all 16 colors) would require 32000 bytes of storage or almost half of the total available memory. This is more memory than the VIC-II video chip can directly access (which is one out of four banks each 16 KiByte in size). The graphic screen is split up in blocks equal in size to character on the character screen. So the bitmap is split up into 40 columns by 25 rows for a total of 1000 blocks. Each block is 8 by 8 pixels in size and can use only two colors out of the total of sixteen colors. It is possible to increase that to three colors freely choosen per block and one global color, but that will result in also halving the resolution. So for converting a high resolution image, a careful selection needs to be made which two colors to use for each individual 8x8 pixel block.

Genetic Algorithm to convert imagesAlthough the task doesn't look too complicated, there are actually 112 different choices of two colors possible. The pixels in a block can form 2^64 different combinations. So a complete exhaustive search is really not possible. So we either need some form of heuristics to speed up the search or maybe a smarter way to do the search for a good match. It is not immediately clear what would be good heuristics, so I've choosen to go for a better search method. The method I have used is called genetic algorithms (GAs for short), which is a form of stochastic search. A lot can be written about these techniques. The short story is that you generate lots of candidate images. Then with operations inspired by genetics like combining different parts (cross-over) and slightly modifying the images (mutation) you try to come with better images over time. The best images found previously are used as basis for the next images that are generated. This slowly is improving the final image quality over many iterations. The layout of a C64 screen lends itself well for these techniques as each individual block is independed. So combining partial solutions with cross-over can work well here. It still takes a long time though. All examples in this article have been the result of generating, evaluating and combining over one million candidate pictures. First resultOk so we have a way to search in the image space for the best candidate blocks and then combine them into the final image. The picture below is the result. It is rather disappointing really as the quality is rather poor. Running the search algorithm a lot longer might still improve it a bit, but not by much. Anyway it at least shows that the algorithm works and can produce something on the C64 that is resembling the input.

Adding spatial ditheringThe problem in the previous picture is that the quantisation to the sixteen colors of the C64 palette results in many blocks using only a single color. The color might indeed be the closest available color for these pixels, but the result is a huge loss in detail. The solution is a technique well known in the graphic world called dithering. Here we trade resolution for better color reproduction, but actually also gets some of our details back as it reduces the uniform color resulting from the strong color quantisation. The technique used here is combining 2 by 2 pixels and compare those to a downscaled version of the original with 160 by 100 resolution. The results for this scaled down compare are summed with the original compare result to get a blend that provides some dithering while also retaining some of the details. The result is an improved picture with more detail. Although it is clearly still not the best quality imaginable.

Adding spritesSo what could we do to improve this last result? Until now we only have used the bitmap graphics. However the VIC-II chip can also display sprites or MOBs as they are called in the official documentation. There are 8 of them each 24 pixels wide. That is not enough to conver the complete screen so we need to stretch them. That means each sprite pixel covers two of our bitmap pixels. To keep the resolution of 320 pixels we position the sprites behind the foreground graphics. This gives some weird color choice limitations as pairs of pixels are now interdependent. This could give an pixel artist a headache. However the GA algorithm doesn't care. So a layer of sprites is added and the algorithm is run again. To keep the search time resonable I've choosen a fixed black color (index 0) for the sprite layer. There might be better color schemes available that are not tried by the current implementation. However changing a sprite color would change it for 6 blocks (48 pixels) making it more complex to perform cross-over. The following screenshot shows the result of introducing the extra sprite layer. Especially the eyes and tails of the birds have more detail now.

Temporal ditheringUntil now we only dithered in the spatial domain, but we can also dither in the time domain. Alternating between two different frames with slightly different colors can increase the color depth. The different colors also will blend a bit together allowing for more color combinations resulting in a richer color palette. This technique has a drawback as the refresh-rate of the screen will be cut in half a bit of flickering will occur. When using the Turbo Chameleon cartridge the blend mode can be selected that completely eliminates the flickering. The difference in color value between pixels can be measured and scored in the genetic algorithm fitness function. This way there is some control over how much flickering is produced, but might also limit the number of mix colors that can be produced. The screenshot below show the result of interlacing two frames.

Final resultThe temporal dither trick can be combined with sprites. Alternating between two bitmaps and two different sprite layers. In this case the number of mix colors available in a 8 by 8 block increases to 9. The following screenshot shows the final result of the converter.

Another imageHere is another image converted with the same configuration. Using both temporal and spatial dither and a sprite layer.

Closing remarksThe VIC-II chip allows many tricks for example by vertically splitting the screen. Some timing tricks allow the generation of extra bad-lines. This allows reading new color information during rendering of a row and opens the door to use many more colors in the same 8 by 8 pixel block. Other possible tricks could be changing the x-scroll location having blocks overlap on different lines. Or switch between hires and multicolor to have more possible colors while retaining some of the resolution advantages of hires. The same genetic algorithm will be able to convert to these other formats if the correct output frame generator is added to the code. It is really a general approach for searching for a source to target pixel mapping. One final note and disclamer I have to make. It was a little experiment I've put together in a few evenings. So I don't have a ready made converter application. You can download the source-code by clicking here if you really want it. It might take some tweaking or hairpulling to make it work though. The code is not well structured or documented and might have some bugs as well. I've included a .prg file though that you can run on a C64 to show that the final result does actually work on a real machine. Note I didn't bother to develop the sprite multiplexing code. So for running the result on a real C64, you are limited to using bitmaps. |

This is the source picture used for the experiment. It is scaled down version of an originally hd format bird wallpaper I found online. It is scaled down from the original size of 1920 by 1200 to the 320 by 200 resolution supported by the C64. There is no purpose using a higher resolution picture as those details will be lost anyway and will only consume extra memory and processing power.

Another image used to show of the final converter. Also a downscaled version of some random wallpaper.

I wrote the converter in C/C++. I've found the loadPNG library quite usefull for handling PNG images. The SDL library was used to display the C64 bitmap while it is slowly converted into the target image. GAs require a very good random number generator. I've used one developed by the University of California. See the random.c file in the download package. I've not bothered with any of the headerfiles and just added an extern for the single function I use directly in my code. Yup ugly I know! |

|||